Call for Artifacts

We warmly invite all authors of papers accepted at ISSRE 2026, including the Research Track (REG, PER, and TAR papers) and the Industry Track (full papers only), to submit their artifacts for Artifact Evaluation (AE). Doing so demonstrates the reproducibility, transparency, and technical quality of your work and strengthens the rigor of research in our community.

Participating in AE also offers the opportunity to earn official badges to be displayed alongside your paper in the conference proceedings, highlighting the credibility and quality of your research contributions. Furthermore, all submitted artifacts will be considered for the ISSRE 2026 Best Artifact Award, recognizing outstanding efforts in delivering reusable, well-documented, and impactful research artifacts.

Each artifact submission will be carefully evaluated by at least three members of the Artifact Evaluation Committee (AEC), ensuring a thorough, fair, and constructive review process.

Before submitting your artifact, please review the information below. If you have any questions or concerns, please contact the AE Chairs.

Understanding Artifacts

Artifacts are digital assets that form the backbone of your research, including code, datasets, models, scripts, configuration files, or other materials developed to support your study or produced during experimentation. The Artifact Evaluation (AE) process promotes the transparent dissemination of these materials, advancing open science practices and strengthening the reproducibility and impact of research results.

In the domain of software reliability, artifacts may include tools, datasets (e.g., logs, traces, or raw experimental data), or a combination of both. For example, an Artifact Evaluation Package may consist of an executable tool accompanied by representative sample data that demonstrates its capabilities and supports the claims made in the paper.

While authors are strongly encouraged to release the source code of their tools to maximize transparency and reuse, submitting executable binaries is also acceptable. If you are uncertain whether your artifact is suitable for the AE process, we warmly encourage you to contact the AE Chairs for clarification and guidance.

Objectives of Evaluation and Badges

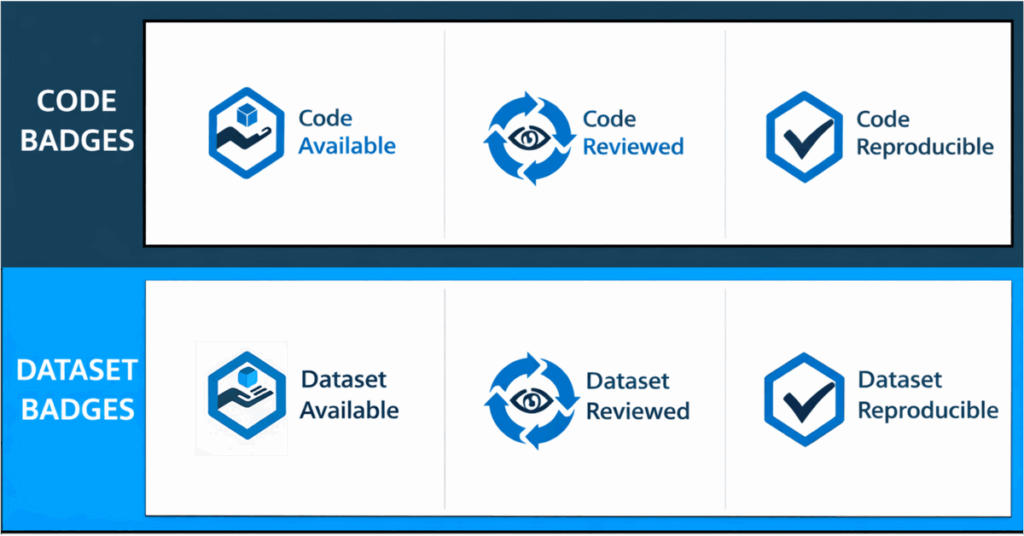

The artifact evaluation process will consider awarding the following IEEE reproducibility badges in two categories, Code and Dataset. Each paper can be considered for the Code and/or Dataset category and can earn up to three badges (Available, Reviewed, and Reproducible):

- Available: This badge signals that author-created digital objects used in the research (including data and/or code) are permanently archived in a public repository that assigns a global identifier (DOI) and guarantees persistence, and are made available under standard open licenses that maximize artifact availability.

- Reviewed: This badge signals that all relevant author-created digital objects used in the research (including data and code) were reviewed according to the criteria provided by the badge issuer.

- Reproducible: This badge signals that an additional step was taken to certify that an independent party has regenerated computational results using the author-created research objects, methods, code, and conditions of analysis.

To earn the Reviewed badge, authors must also obtain the Available badge. Similarly, to earn the Reproducible badge, authors must obtain both the Reviewed and Available badges. Further details on each badge are given below.
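Because the badges are cumulative, a higher badge is only meaningful when every lower one is also met. The short Python sketch below illustrates that rule; the function name awardable_badges and the idea of passing in the set of badges whose criteria were individually met are hypothetical, for illustration only, and the rule would apply separately to each category (Code, Dataset).

```python
from __future__ import annotations

# Badge levels from lowest to highest, as described in this call.
BADGE_ORDER = ("Available", "Reviewed", "Reproducible")


def awardable_badges(passed: set[str]) -> list[str]:
    """Return the badges that can actually be awarded.

    `passed` is a hypothetical input: the badges whose individual criteria
    the artifact met. A badge is awardable only if every lower badge was
    also met, so the result is always a prefix of BADGE_ORDER.
    """
    unknown = passed - set(BADGE_ORDER)
    if unknown:
        raise ValueError(f"unknown badge(s): {sorted(unknown)}")
    awarded: list[str] = []
    for badge in BADGE_ORDER:
        if badge not in passed:
            break  # a missing lower badge blocks every higher one
        awarded.append(badge)
    return awarded


if __name__ == "__main__":
    print(awardable_badges({"Available", "Reviewed"}))      # ['Available', 'Reviewed']
    print(awardable_badges({"Reviewed", "Reproducible"}))   # [] (Available is missing)
```

In other words, an artifact that would pass the Reproducible checks but is not archived with a DOI and an open license earns no badge at all.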

How to Prepare and Submit an Artifact

Your artifact should contain a plain-text README file with a .txt extension. The README file should include the following eight elements:

- TARGET CATEGORY: Code and/or Dataset

- TARGET BADGE: Available, Reviewed, or Reproducible

- INFO: Title of the accepted paper, its submission ID, and author information (names, affiliations, and emails)

- EXPECTED BEHAVIOUR: What the artifact is intended to do, including a description of its scope and the output it produces.

- ARTIFACT DESCRIPTION: Explain the contents of the artifact, detailing the folder and file structure and any other relevant information.

- ENVIRONMENT SETUP: Specify the requirements to run the artifact, including the minimum RAM and CPU. If your artifact depends on a specific operating system, you are required to provide a suitable virtualization environment. In any case, we strongly recommend providing a Docker image for ease of access; if you do, also include instructions on how to run it.

- GETTING STARTED: Describe how to set up the artifact and validate its general functionality using a small example dataset. This setup and execution should ideally take no more than 30 minutes.

- REPRODUCIBILITY: Describe in detail how to reproduce the paper’s claims and results.

As a guideline, we recommend preparing your submission so that setup, execution, and analysis can be completed within 4 hours.

Which sections are required depends on the type of artifact you are submitting and the badges you are requesting. Specifically, the GETTING STARTED section is required for the Reviewed and Reproducible badges, and the REPRODUCIBILITY section is required for the Reproducible badge.
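Before uploading, it may help to check mechanically that the README names every element your target badge needs. The Python sketch below is a hypothetical helper, not an official AEC tool. It assumes each element starts its own line as "LABEL:" (optionally after a "-", "*", or "#" marker), which this call does not mandate, and it conservatively treats the first six elements as required for every badge, which may be stricter than the AEC for some artifact types.

```python
from __future__ import annotations

import re

# ASSUMPTION: these six elements are treated as always required.
BASE_SECTIONS = [
    "TARGET CATEGORY",
    "TARGET BADGE",
    "INFO",
    "EXPECTED BEHAVIOUR",
    "ARTIFACT DESCRIPTION",
    "ENVIRONMENT SETUP",
]
# Extra sections per target badge, following the requirements stated above.
EXTRA_SECTIONS = {
    "Available": [],
    "Reviewed": ["GETTING STARTED"],
    "Reproducible": ["GETTING STARTED", "REPRODUCIBILITY"],
}


def missing_sections(readme_text: str, target_badge: str) -> list[str]:
    """Return the section labels expected for target_badge but absent from readme_text."""
    if target_badge not in EXTRA_SECTIONS:
        raise ValueError(f"unknown badge: {target_badge!r}")
    missing = []
    for label in BASE_SECTIONS + EXTRA_SECTIONS[target_badge]:
        # Label at the start of a line, optionally after a list/heading marker,
        # followed by a colon; matching ignores case.
        pattern = rf"^\s*(?:[-*#]+\s*)?{re.escape(label)}\s*:"
        if not re.search(pattern, readme_text, flags=re.IGNORECASE | re.MULTILINE):
            missing.append(label)
    return missing
```

For example, missing_sections(open("README.txt", encoding="utf-8").read(), "Reproducible") returns an empty list when every expected label is present.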

Please also include a LICENSE file containing an open-source license that clearly describes the distribution rights for your project.

Once you have prepared your artifact, including both the required README file and LICENSE file, upload it to Zenodo or a similar service (e.g., figshare) to obtain a DOI. Include this DOI both in the camera-ready version of your paper and in your artifact submission.
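A quick pre-upload check of the top-level files can catch a missing README or LICENSE before a DOI is minted. The Python sketch below is hypothetical: it assumes the README is named README.txt and accepts LICENSE, LICENSE.txt, or LICENSE.md (case-insensitive), whereas this call only requires "a README file with a .txt extension" and "a LICENSE file".

```python
from __future__ import annotations

from collections.abc import Iterable

# ASSUMPTION: accepted names, compared in upper case.
README_NAME = "README.TXT"
LICENSE_NAMES = {"LICENSE", "LICENSE.TXT", "LICENSE.MD"}


def package_problems(top_level_names: Iterable[str]) -> list[str]:
    """Report missing README/LICENSE files among an artifact's top-level names."""
    names = {name.upper() for name in top_level_names}
    problems = []
    if README_NAME not in names:
        problems.append("missing README.txt")
    if not names & LICENSE_NAMES:  # no accepted license file name present
        problems.append("missing LICENSE file")
    return problems
```

Usage, with a hypothetical folder name: package_problems(os.listdir("my-artifact")) returns an empty list when both files are present.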

To submit the artifact for evaluation, use the EasyChair platform. You must upload a copy of the accepted paper with a cover page at the beginning that clearly indicates the DOI for accessing the artifact. The artifact available at that DOI MUST include the README file, and the README MUST clearly contain all the information requested above.

More information about the badges and the Best Artifact Award follows.

Available Badge

To qualify for the Available badge, your artifact must be publicly accessible through a hosting platform. While Zenodo is commonly used and recommended for its reliability and integration with scholarly practices, you are free to choose the platform that best suits your needs. Ensure that your artifact is self-contained and versioned when you upload it. Once it is uploaded, the platform will generate a DOI, which is required for the artifact evaluation submission.

Kindly note that once submitted, your artifact will be immediately accessible to the public and cannot be modified or deleted. However, you can upload an updated version (e.g., to address reviewer feedback), which will be assigned a new DOI. Furthermore, please ensure that your artifact is accompanied by a LICENSE file that clearly outlines the distribution rights. To be eligible for the Available badge or higher, this file must contain an open-source license.

Reviewers will check the validity of the DOI and the LICENSE file, and then assess the clarity and completeness of your artifact and README file.

Reviewed Badge

Reviewers will evaluate your artifact’s basic functionality by following the instructions in the GETTING STARTED section of the README file. The Reviewed badge will be awarded if reviewers can set up the artifact and verify its basic functionality using a small sample dataset within 30 minutes.

Reproducible Badge

Earning the Reproducible badge entails an additional level of scrutiny: reviewers will reproduce computational results using your research objects, methods, code, and analysis conditions. A REPRODUCIBILITY section with detailed instructions in the README is therefore essential for this badge. Reviewers will thoroughly assess whether your artifact supports the paper’s main claims.

Please note that if reproducing results requires extensive computation or substantial computational resources, reviewers may exercise discretion in running experiments. If obtaining results necessitates significant computation time (e.g., days or months), reviewers may not be able to reproduce them fully.

Clear, detailed documentation and instructions are essential for earning any badge.

Should you require further clarification or assistance, please feel free to contact the AE Chairs.

ISSRE 2026 Best Artifact Award

The ISSRE 2026 Best Artifact Award recognizes authors who have made outstanding efforts in creating and disseminating exceptional research artifacts that advance the field.

Evaluation Procedure

Artifacts will be evaluated by the Artifact Evaluation Committee. Committee members may contact the authors to clarify open issues with executing the artifacts. Under no circumstances will amendments to the declared procedures or to the submitted artifact be accepted; clarifications are intended only to prevent factual mistakes by the Evaluation Committee.

Composition of the Evaluation Committee

The Evaluation Committee is open to candidates. If you wish to join, please contact the AE Chairs. Note that the committee will be mostly active from the end of August through September, and we expect each member to be assigned 2 artifacts for review.

Important Dates (AoE)

- Artifact Evaluation Submission: August 19, 2026

- Artifact Evaluation Notification: September 15, 2026

Submission Page

Artifacts are submitted via EasyChair at the following URL:

Artifact Evaluation Chairs

- Naghmeh Ivaki, University of Coimbra, Portugal (naghmeh@dei.uc.pt)

- Fumio Machida, University of Tsukuba, Japan (machida@cs.tsukuba.ac.jp)